NTFS tricks and the full C: disk

In the last week or so I’ve hit a point on my workstation where booting and running everyday apps has slowed to an unbearable crawl. This has happened to me before, but I switch machines often enough that I usually avoid the issue by, well, switching machines.

This time I think I have to actually stop and fix it — because I won’t have a new machine for another week or so.

Dang. Forced learning.

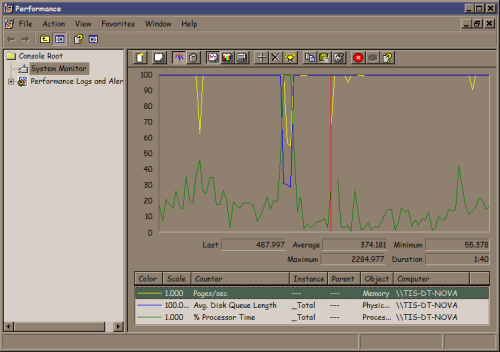

Perfmon makes it clear that the disk is thrashing. Note that the top two counters, pages/sec (yellow) and average disk access queue (blue), are pegged while CPU (green) is mild.

This is with the machine basically idle. I have the usual suite of things like Google Desktop and TortoiseSVN running, so I’m not shocked that things are accessing the disk, but the machine is almost unusable and it was running fine about a month ago. No, I checked, I don’t have a virus. In my life the problem has never been “it’s a virus.” It has always been “I did something stupid.” I guess viruses also fall into that I-did-something-stupid bin …

I’m sure some of you are ahead of the story and saying “has he checked his disk space?” Turns out I have almost 21% free space on a 320 GB drive. Is that a problem?

Yes.

This is a Windows XP system with NTFS on the C: drive. I actually have a couple more 320 GB drives on this machine but they’re basically empty. Why are they empty? Dumb reasons. I have a bunch of alpha and beta quality projects going, each of which has all kinds of massive data sets, and for each of which the developers insist “install it on the C: drive, it doesn’t work quite right in other locations.” Sigh. They are mostly not my developers so I can’t explain (or yell) to them HOW STUPID IT IS TO WELD YOUR APP TO THE C: DRIVE.

But why is an 80% full NTFS partition a problem? I’ve known for years that NTFS disk performance goes to crap once you get north of 70% full or so, but when I started this I did not actually know why. I found some of the best information on this topic on this page by Mitch Tulloch at O’Reilly Windows Devcenter.

Based on Mitch’s descriptions, my best theory is that it’s a combination of the Master File Table (MFT) getting fragmented as well as space needed for the pagefile. This hints at two fixes: move the paging file and clear some disk space.
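If you’d rather have the machine tell you when a drive drifts past that rough 70% mark, something like the Python sketch below would do it. It only relies on the standard library’s `shutil.disk_usage`. The function names and the threshold constant are my own inventions for illustration, and the 70% figure is just the rule of thumb from above, not an official NTFS limit.

```python
import os
import shutil

# Rule-of-thumb ceiling taken from this post; an assumption, not an NTFS spec value.
FULL_THRESHOLD_PERCENT = 70.0


def percent_used(total_bytes, free_bytes):
    """Return how full a volume is, as a percentage of its total size."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return 100.0 * (total_bytes - free_bytes) / total_bytes


def volume_report(path, threshold=FULL_THRESHOLD_PERCENT):
    """Return (percent_used, over_threshold) for the volume that holds `path`."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (in bytes)
    used = percent_used(usage.total, usage.free)
    return used, used > threshold


if __name__ == "__main__":
    # Root of the current drive: "C:\\" on a typical Windows box, "/" elsewhere.
    root = os.path.abspath(os.sep)
    used, too_full = volume_report(root)
    status = "over" if too_full else "under"
    print(f"{root} is {used:.1f}% full ({status} the {FULL_THRESHOLD_PERCENT:.0f}% mark)")
```

Run on a drive like mine, this would report something close to 80% full and flag it as over the mark.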

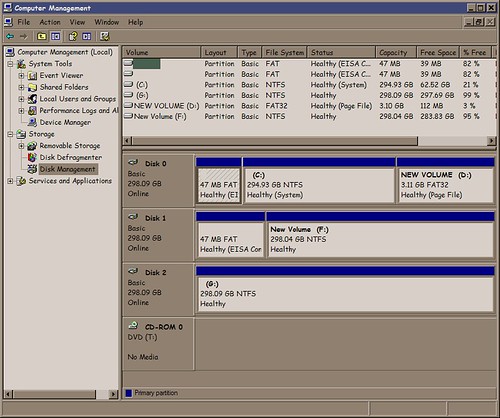

Getting into the disk management applet, I saw that Dell helpfully left me an unused 3.11 GB partition, so I formatted it FAT32, declared a 3067 MB page file there, and removed the one on the C: drive. Note: ideally the pagefile partition would be on a physically separate disk, but I’m working with what I have.

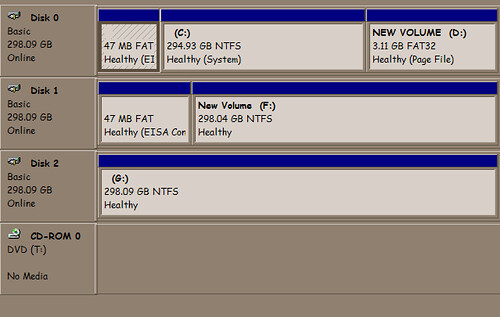

After a reboot I have:

Performance is much better — the machine now thrashes for about 3 minutes after boot and login as opposed to 15+ (!) minutes before moving the pagefile location.

However, the C: drive is still 79% full (down from 82%), so I’m still at serious risk of MFT fragmentation. Let’s clean up the disk.

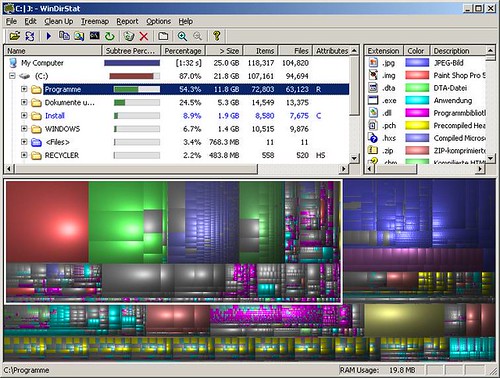

Gina Trapani wrote this very helpful post about using WinDirStat to see what’s using space on your disk.

WinDirStat is a really cool tool! I’ve learned about (and subsequently forgotten) this tool several times. I have on the order of 1,000,000 files on this system so the graphical tool to help me home in on the disk hogs is really helpful.
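For the times when I’ve forgotten WinDirStat yet again, a text-only version of the same idea is easy to sketch in Python: add up the file sizes under each top-level folder and list the biggest ones first. This is not how WinDirStat works internally, just a rough stand-in. The function names `tree_size` and `biggest_children` are made up for this example, and unreadable files and folders are silently skipped, so the totals can come out low.

```python
import os


def tree_size(path):
    """Total size in bytes of the regular files under `path`.

    Symlinks are not followed, and anything that cannot be read is skipped.
    """
    total = 0
    for dirpath, _dirnames, filenames in os.walk(path):
        for name in filenames:
            full = os.path.join(dirpath, name)
            try:
                if not os.path.islink(full):
                    total += os.path.getsize(full)
            except OSError:
                pass  # file vanished or access denied; ignore it
    return total


def biggest_children(root, top_n=10):
    """Return up to `top_n` (size_in_bytes, name) pairs for the entries
    directly inside `root`, largest first."""
    sizes = []
    with os.scandir(root) as entries:
        for entry in entries:
            try:
                if entry.is_dir(follow_symlinks=False):
                    size = tree_size(entry.path)
                elif entry.is_file(follow_symlinks=False):
                    size = entry.stat(follow_symlinks=False).st_size
                else:
                    continue  # symlinks and other special entries
            except OSError:
                continue
            sizes.append((size, entry.name))
    sizes.sort(reverse=True)
    return sizes[:top_n]
```

Calling `biggest_children("C:\\")` and printing the pairs gives a crude ranked list of the disk hogs. With a million or so files, expect it to take a while.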

After some quality time marvelling at all the cruft I had accumulated on my machine (why did I have two Cygwin installations? Why did I have one?), I moved or deleted about 60 GB of stuff and got to around 38% free, and the machine is running much better now.

The scary thing is that I absolutely “need” the 180 GB in use now. It was only a few years ago that 30 GB drives were ok…